AI Inference-as-a-service Market Size 2026-2030

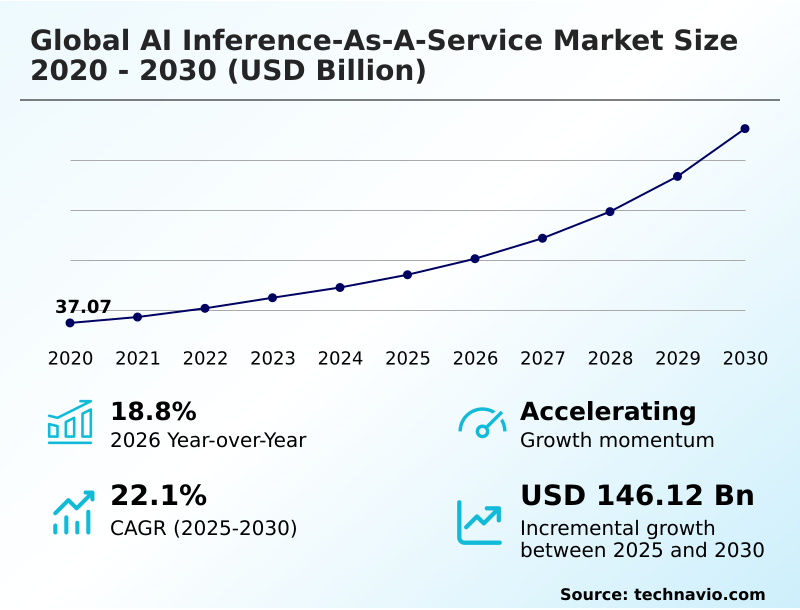

The AI inference-as-a-service market is forecast to grow by USD 146.12 billion, at a CAGR of 22.1% from 2025 to 2030. The proliferation and increasing complexity of AI models will drive the market.

Major Market Trends & Insights

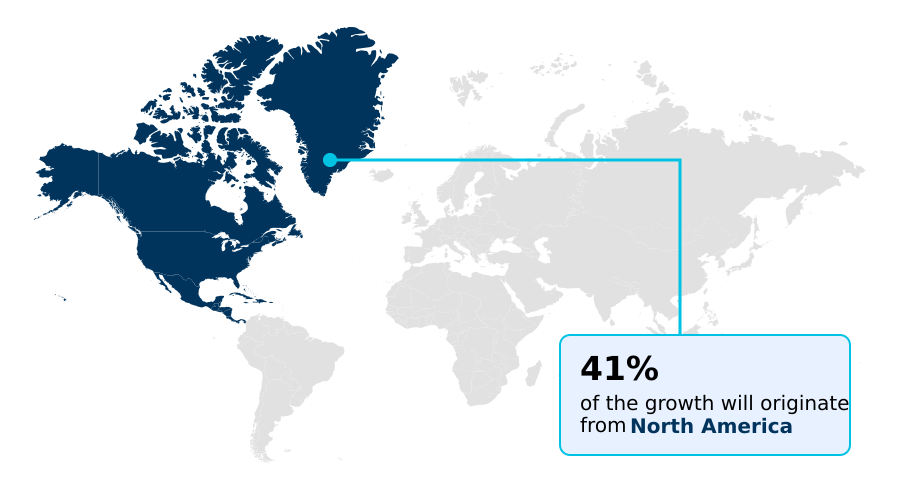

- North America dominated the market and is estimated to account for 41.1% of global market growth during the forecast period.

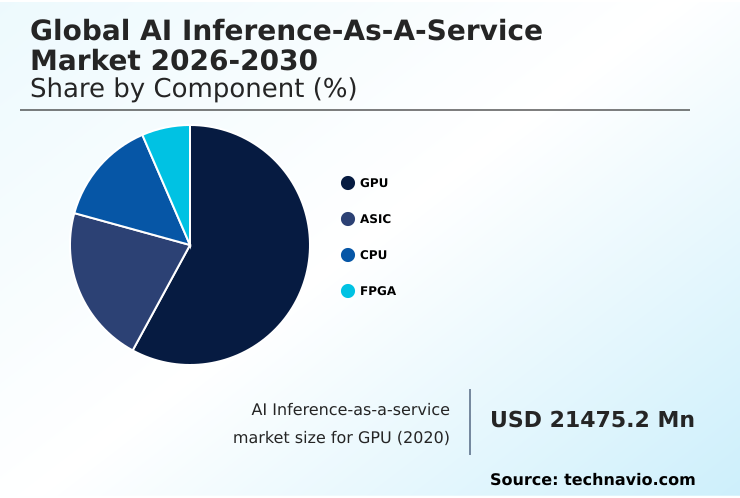

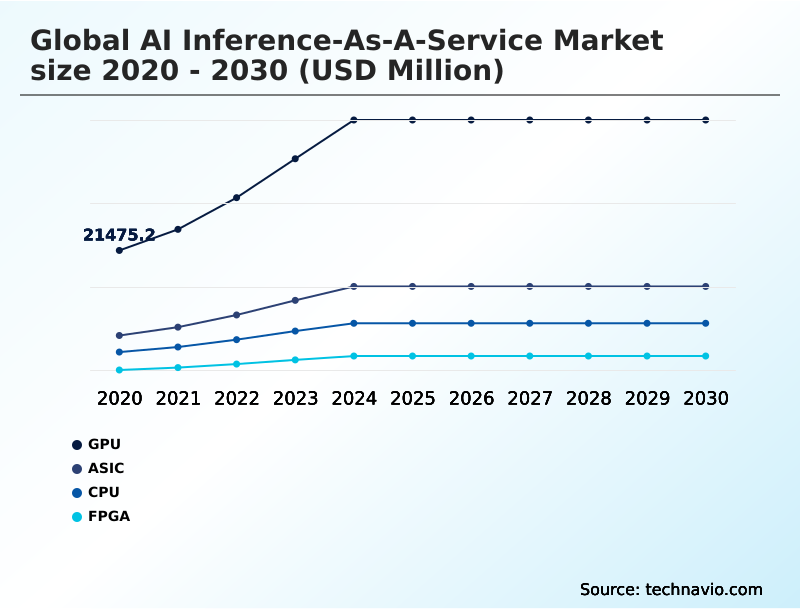

- By Component - GPU segment was valued at USD 42.28 billion in 2024

- By Type - HBM segment accounted for the largest market revenue share in 2024

Market Size & Forecast

- Market Opportunities: USD 194.30 billion

- Market Future Opportunities: USD 146.12 billion

- CAGR from 2025 to 2030: 22.1%
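As a rough illustration of how the growth figure and the CAGR relate, the sketch below backs out the base-year market size implied by USD 146.12 billion of incremental growth at a 22.1% CAGR. It assumes constant annual compounding over five years from the 2025 base year. The function names are illustrative, and the implied sizes are arithmetic consequences of that assumption, not figures stated in the report.

```python
def implied_base_size(incremental_growth: float, cagr: float, years: int) -> float:
    """Return the base-year size B that satisfies
    B * ((1 + cagr) ** years - 1) == incremental_growth.
    """
    growth_factor = (1.0 + cagr) ** years
    return incremental_growth / (growth_factor - 1.0)


def projected_size(base_size: float, cagr: float, years: int) -> float:
    """Compound a base-year size forward at a constant annual rate."""
    return base_size * (1.0 + cagr) ** years


if __name__ == "__main__":
    # Inputs from the report: USD 146.12 billion growth, 22.1% CAGR.
    # Assumption: five compounding periods (2025 to 2030).
    base = implied_base_size(146.12, 0.221, 5)
    final = projected_size(base, 0.221, 5)
    print(f"Implied 2025 base size: USD {base:.2f} billion")
    print(f"Implied 2030 size:      USD {final:.2f} billion")
```

If the report counts the forecast window differently (for example, four compounding periods from 2026 to 2030), the implied base changes accordingly, so the `years` argument is the main assumption to check.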

Market Summary

- The AI inference-as-a-service market is expanding rapidly as organizations move from model training to large-scale production deployment and look for scalable, cost-efficient solutions. This shift is driven by the need to operationalize complex machine learning models, including generative AI and multimodal systems, which demand more computational power than most in-house infrastructure can provide.

- The market enables businesses to leverage state-of-the-art AI accelerators and high bandwidth memory on a pay-as-you-go basis, eliminating significant upfront capital expenditure. For instance, a logistics company can utilize real-time processing to optimize delivery routes dynamically, analyzing live traffic and weather data to reduce fuel costs and improve delivery times.

- Key trends include the move toward serverless inference, which simplifies deployment, and the adoption of hybrid cloud strategies to balance performance with data security. However, challenges such as AI model portability and the high costs associated with advanced hardware persist, shaping the competitive landscape.

- This service model effectively democratizes access to powerful AI, fostering innovation across industries by making advanced intelligence a readily consumable utility rather than a capital-intensive asset.
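The pay-as-you-go argument above can be made concrete with a simple break-even calculation. The sketch below compares renting accelerator time against buying hardware outright. All prices in the usage example are hypothetical placeholders, not figures from the report, and the model ignores depreciation, utilization limits, and financing.

```python
def breakeven_hours(capex: float, owned_hourly_opex: float,
                    on_demand_hourly_rate: float) -> float:
    """Usage hours at which owning hardware becomes cheaper than renting.

    Returns float('inf') when the on-demand rate does not exceed the
    hourly cost of operating owned hardware, so owning never pays off.
    """
    hourly_saving = on_demand_hourly_rate - owned_hourly_opex
    if hourly_saving <= 0:
        return float("inf")
    return capex / hourly_saving


def cheaper_option(expected_hours: float, capex: float,
                   owned_hourly_opex: float,
                   on_demand_hourly_rate: float) -> str:
    """Pick the lower-cost option for an expected number of usage hours."""
    own_cost = capex + owned_hourly_opex * expected_hours
    rent_cost = on_demand_hourly_rate * expected_hours
    return "pay-as-you-go" if rent_cost <= own_cost else "owned hardware"


if __name__ == "__main__":
    # Hypothetical inputs: USD 30,000 server, USD 0.50/h to operate,
    # USD 3.00/h on-demand rate.
    print(breakeven_hours(30_000, 0.5, 3.0))
    print(cheaper_option(2_000, 30_000, 0.5, 3.0))
    print(cheaper_option(20_000, 30_000, 0.5, 3.0))
```

Under these placeholder prices, low or bursty usage favors the service model, while sustained heavy usage eventually favors owned hardware.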


How is the AI Inference-as-a-service Market Segmented?

The AI inference-as-a-service industry research report provides comprehensive data (region-wise segment analysis), with forecasts and estimates in "USD million" for the period 2026-2030, as well as historical data for 2020-2024, for the following segments.

- Component
  - GPU
  - ASIC
  - CPU
  - FPGA
- Type
  - HBM
  - DDR
- Application
  - Machine learning models
  - Generative AI
  - Natural language processing
  - Computer vision
- Deployment
  - Cloud
  - Edge
- Geography
  - North America
    - US
    - Canada
    - Mexico
  - APAC
    - China
    - Japan
    - India
  - Europe
    - Germany
    - UK
    - France
  - South America
    - Brazil
    - Colombia
    - Argentina
  - Middle East and Africa
    - Saudi Arabia
    - UAE
    - South Africa
  - Rest of World (ROW)


By Component Insights

The GPU segment is estimated to witness significant growth during the forecast period.

The graphics processing unit (GPU) segment remains the cornerstone of the AI inference-as-a-service market, providing the foundational hardware for GPU-accelerated computing.

This dominance is due to their parallel processing capabilities, which are uniquely suited for handling increasing AI model complexity. As organizations demand real-time AI insights, these components are critical for scalable AI infrastructure, especially for complex transformer architectures.

AI inference platforms leverage these AI accelerators to enable low-latency inference in cloud deployment environments. While specialized hardware like the tensor processing unit is emerging, the versatility of GPUs in handling diverse workloads ensures their central role.

This is demonstrated in deployments where confidential computing has been integrated, with companies reporting a 20% improvement in secure data throughput without compromising performance. Their adaptability ensures they remain vital for AI optimization techniques.

The GPU segment was valued at USD 42.28 billion in 2024 and is expected to grow gradually during the forecast period.

Regional Analysis

North America is estimated to contribute 41.1% to the growth of the global market during the forecast period. Technavio’s analysts have explained in detail the regional trends and drivers that shape the market during the forecast period.
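For a sense of scale, the regional share can be converted into an absolute figure. The sketch below assumes that the 41.1% share applies to the full incremental growth of USD 146,117.2 million reported in the market scope table. The function name is illustrative, and the result is derived arithmetic rather than a figure stated in the report.

```python
def regional_contribution(total_growth_musd: float, share_pct: float) -> float:
    """Absolute growth (USD million) attributed to a region with the given share."""
    return total_growth_musd * share_pct / 100.0


if __name__ == "__main__":
    # Inputs from the report: USD 146,117.2 million total growth, 41.1% share.
    north_america = regional_contribution(146_117.2, 41.1)
    print(f"North America: about USD {north_america:,.1f} million")
```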


The geographic landscape is characterized by distinct regional priorities. North America leads in large-scale cloud infrastructure, leveraging AI hardware innovation for hyperscale data centers. Europe emphasizes data privacy in AI services and sovereign capabilities, driving adoption of hybrid models.

Meanwhile, APAC is a hub for on-device intelligence and edge computing, driven by its mobile-first economies and semiconductor manufacturing prowess. This region has seen a 30% increase in the deployment of real-time processing for logistics and smart city applications.

Specialized hardware, including deep learning workstations and FPGAs for low-latency AI, is gaining traction globally for applications like natural language processing.

Innovations such as the wafer-scale engine and advanced silicon interposer technology are enabling more cost-efficient AI deployment for complex multimodal systems, addressing diverse regional demands for both centralized and distributed intelligence.

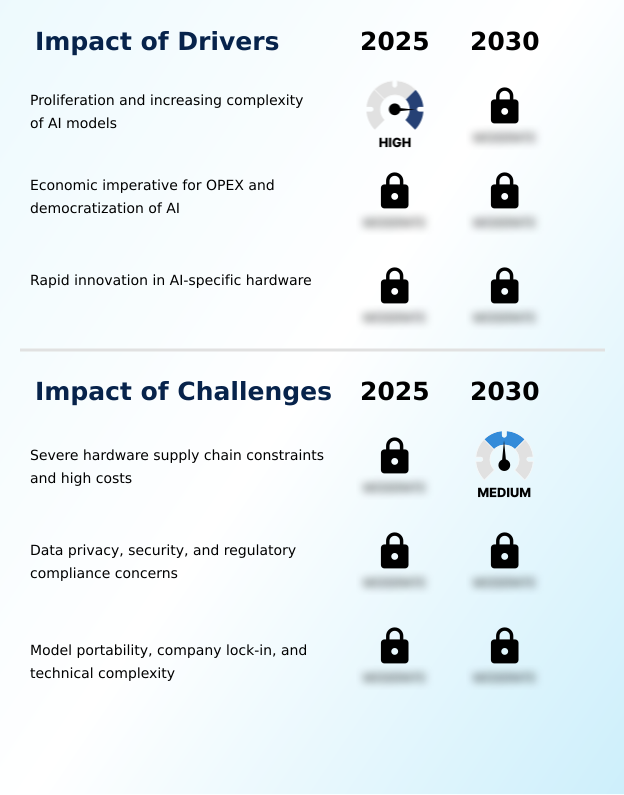

Market Dynamics

Our researchers analyzed the data with 2025 as the base year, along with the key drivers, trends, and challenges. A holistic analysis of drivers will help companies refine their marketing strategies to gain a competitive advantage.

- The AI inference-as-a-service market is shaped by critical technical and economic considerations. The central challenge is the cost of running large language models, which is driving intense focus on optimizing generative AI for low latency and high throughput. A key debate involves GPU versus ASIC for AI inference, where general-purpose flexibility is weighed against specialized efficiency.

- This has led to an exploration of multi-cloud AI inference deployment, which allows enterprises to avoid lock-in and leverage unique hardware advantages from different providers, improving workload resilience by over 20% compared to single-provider strategies. However, concerns around data security in third-party AI services are paramount, pushing the adoption of confidential computing for secure AI inference.

- Serverless inference offers a solution for unpredictable traffic by abstracting infrastructure management, which simplifies AI deployment for developers. Still, the challenges of AI model portability and vendor lock-in persist. Addressing AI inference hardware supply chain issues is crucial for providers.

- The benefits of HBM in AI accelerators are clear for memory-bound tasks, while reducing AI inference costs with quantization is a common software optimization. As enterprises focus on deploying machine learning models at scale, they must consider the generative AI impact on compute resources and plan for natural language processing API integration.

- Meanwhile, edge computing for real-time computer vision is enabling new applications in computer vision for industrial automation. The choice between cloud deployment for scalable AI models and edge deployment for low-latency AI, alongside managing AI inference in hybrid cloud, defines the modern architectural playbook.
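Quantization, mentioned above as a common software optimization, can be sketched in a few lines. The example below shows symmetric per-tensor int8 quantization in plain Python, chosen here purely for illustration. Production inference stacks typically use more elaborate schemes (per-channel scales, calibration data), and the function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization of floats to integers in [-127, 127].

    Returns the quantized values and the scale needed to recover
    approximate floats.
    """
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Avoid division by zero for an empty or all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Map int8 values back to approximate floating-point weights."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print(q, scale, worst)
```

Storing each weight in one byte instead of the four bytes of a 32-bit float cuts weight storage to a quarter, at the cost of a rounding error of at most about half the scale per weight.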

What are the key market drivers leading to the rise in the adoption of AI Inference-as-a-service Industry?

- The proliferation and increasing complexity of AI models requiring massive computational power act as a primary catalyst for the market.

- Market growth is fundamentally driven by the escalating complexity of AI models and the economic imperative to shift to an OPEX model for AI.

- The computational demands of generative AI applications and advanced computer vision systems necessitate access to massive, liquid-cooled server farms that are beyond the reach of most organizations. This dynamic fuels the need for on-demand services.

- Concurrently, rapid innovation in hardware, particularly the development of the application-specific integrated circuit (ASIC) for AI inference, is making machine learning deployment more efficient. Leading providers have demonstrated that custom ASICs can improve performance-per-watt by 3x for specific tasks.

- This progress in AI inference optimization, alongside software techniques like model quantization and knowledge distillation, lowers the barrier to entry and expands the market's reach, despite ongoing challenges in the hardware supply chain for AI.

What are the market trends shaping the AI Inference-as-a-service Industry?

- The rise of serverless inference and the development of higher-level abstractions are dominant trends, simplifying the deployment process for software developers.

- Key market trends are centered on simplifying deployment and improving efficiency. The adoption of serverless inference is accelerating, with platforms now offering sophisticated AI inference APIs that abstract away infrastructure complexities for machine learning models.

- This trend is coupled with a move toward hybrid cloud and multi-cloud strategies, which addresses concerns over AI model portability and vendor lock-in; firms adopting a hybrid cloud AI strategy report a 25% improvement in deployment flexibility. The debate over cloud vs edge inference continues, with many opting for a balanced approach.

- Furthermore, optimization is critical, with techniques like model pruning and a focus on green computing becoming standard for AI model serving. These efficiency gains have reduced the energy consumption for some NLP as a service workloads by up to 15%, making generative AI more sustainable.

What challenges does the AI Inference-as-a-service Industry face during its growth?

- Severe hardware supply chain constraints and high capital costs for advanced semiconductors represent foundational barriers currently impacting the market.

- Significant challenges constrain the market, primarily centered on hardware scarcity and security concerns. Severe supply chain limitations for critical components like high bandwidth memory (HBM) for large language models and advanced AI accelerators create bottlenecks, hindering cost-efficient AI deployment. This is compounded by the high cost of custom silicon for AI, including wafer-scale engine and reconfigurable dataflow unit technologies.

- Furthermore, data privacy in AI services remains a primary barrier, as enterprises hesitate to move sensitive workloads to third-party clouds. This has slowed the adoption of some computer vision APIs for regulated industries by an estimated 20%. While edge deployment offers a partial solution, it presents its own complexities in managing distributed systems.

- Balancing performance, cost, and security is an ongoing struggle, even as new hardware with specialized tensor cores and efficient CPU for AI workloads becomes available.

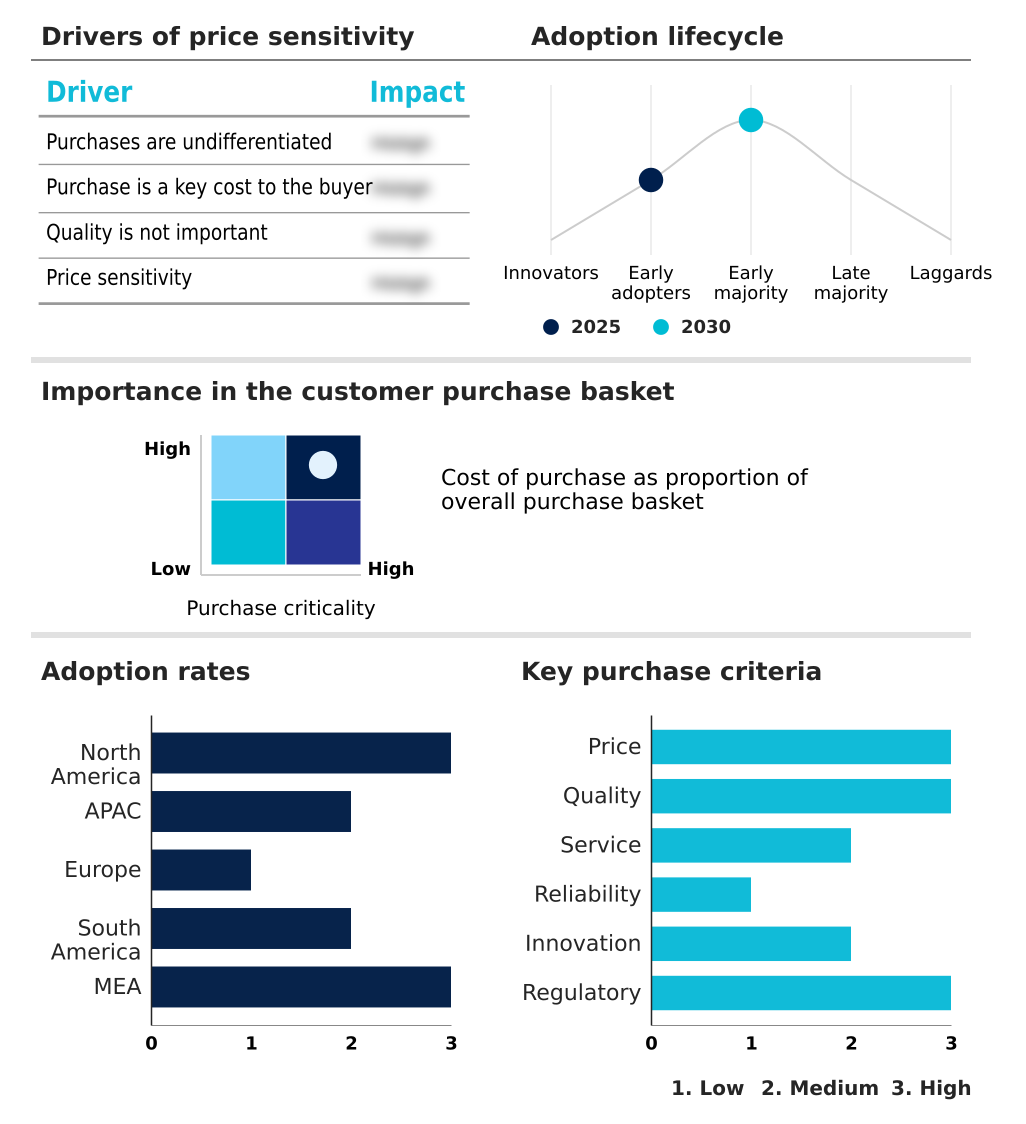

Exclusive Technavio Analysis on Customer Landscape

The AI inference-as-a-service market forecasting report includes the adoption lifecycle of the market, from the innovator stage to the laggard stage. It focuses on adoption rates in different regions based on penetration. The report also includes key purchase criteria and drivers of price sensitivity to help companies evaluate and develop their market growth strategies.

Customer Landscape of AI Inference-as-a-service Industry

Competitive Landscape

Companies are implementing various strategies, such as strategic alliances, partnerships, mergers and acquisitions, geographical expansion, and product/service launches, to enhance their presence in the industry.

Amazon.com Inc. - Provides a serverless platform enabling scalable deployment of machine learning models with production-ready infrastructure, low-latency APIs, and integrated observability tools.

The industry research and growth report includes detailed analyses of the competitive landscape of the market and information about key companies, including:

- Amazon.com Inc.

- Baseten

- BentoML

- Cerebras Systems Inc.

- CoreWeave Inc

- Databricks Inc.

- Deep Infra Inc.

- DigitalOcean Holdings Inc.

- Fireworks AI Inc.

- Google LLC

- Groq Inc.

- Hugging Face Inc.

- Lambda Labs Inc.

- Microsoft Corp.

- Modal Labs Inc.

- Nebius Group N.V

- NVIDIA Corp.

- Replicate Inc.

- RunPod Inc.

- SambaNova Systems Inc.

Qualitative and quantitative analysis of companies has been conducted to help clients understand the wider business environment as well as the strengths and weaknesses of key industry players. Data is qualitatively analyzed to categorize companies as pure play, category-focused, industry-focused, and diversified; it is quantitatively analyzed to categorize companies as dominant, leading, strong, tentative, and weak.

Recent Developments and News in the AI Inference-as-a-service Market

- In September 2024, CoreWeave Inc. announced a strategic partnership with a leading AI framework developer to provide optimized, full-stack solutions for large-scale generative AI model training and inference, enhancing performance by up to 25% on its specialized GPU cloud.

- In November 2024, Groq Inc. secured USD 300 million in a Series D funding round to scale production of its Language Processing Unit (LPU) and expand its cloud-based inference services to meet growing demand for real-time AI applications.

- In February 2025, Google LLC launched its next-generation Tensor Processing Unit (TPU) v6, available on Google Cloud, promising a 2x performance-per-dollar improvement for inference workloads and introducing new features for efficient multimodal model serving.

- In April 2025, Amazon.com Inc. expanded its AI inference capabilities by opening three new AWS regions in Southeast Asia, designed around its custom Inferentia2 and Trainium accelerators to offer lower latency and data sovereignty for customers in APAC.


| Market Scope | |
|---|---|
| Page number | 316 |
| Base year | 2025 |
| Historic period | 2020-2024 |
| Forecast period | 2026-2030 |
| Growth momentum & CAGR | Accelerate at a CAGR of 22.1% |
| Market growth 2026-2030 | USD 146117.2 million |
| Market structure | Fragmented |
| YoY growth 2025-2026 (%) | 18.8% |
| Key countries | US, Canada, Mexico, China, Japan, India, South Korea, Australia, Indonesia, Germany, UK, France, Italy, Spain, The Netherlands, Brazil, Colombia, Argentina, Saudi Arabia, UAE, South Africa, Israel and Turkey |
| Competitive landscape | Leading Companies, Market Positioning of Companies, Competitive Strategies, and Industry Risks |

Research Analyst Overview

- The AI inference-as-a-service market is evolving from a niche capability to a foundational enterprise utility, driven by the operational need for real-time processing. This shift requires organizations to navigate a complex landscape of hardware and software, including high-performance graphics processing units and specialized field programmable gate array options.

- The economics of deployment are fundamentally changing, with the adoption of serverless inference and model quantization allowing companies to manage costs while handling complex neural network math. For example, firms leveraging knowledge distillation have reported the ability to run models on hardware with 40% less memory without significant accuracy loss.

- This efficiency is critical for deploying everything from generative AI to computer vision applications. Boardroom decisions are increasingly influenced by the choice between cloud deployment and edge deployment, which impacts data governance and responsiveness.

- The rise of multimodal systems and complex transformer architectures makes access to platforms offering high bandwidth memory and reconfigurable dataflow unit technology a competitive necessity for achieving low-latency inference.

What are the Key Data Covered in this AI Inference-as-a-service Market Research and Growth Report?

What is the expected growth of the AI Inference-as-a-service Market between 2026 and 2030?

- USD 146.12 billion, at a CAGR of 22.1%

What segmentation does the market report cover?

- The report is segmented by Component (GPU, ASIC, CPU, and FPGA), Type (HBM, and DDR), Application (Machine learning models, Generative AI, Natural language processing, and Computer vision), Deployment (Cloud, and Edge) and Geography (North America, APAC, Europe, South America, Middle East and Africa)

Which regions are analyzed in the report?

- North America, APAC, Europe, South America and Middle East and Africa

What are the key growth drivers and market challenges?

- Proliferation and increasing complexity of AI models, Severe hardware supply chain constraints and high costs

Who are the major players in the AI Inference-as-a-service Market?

- Amazon.com Inc., Baseten, BentoML, Cerebras Systems Inc., CoreWeave Inc, Databricks Inc., Deep Infra Inc., DigitalOcean Holdings Inc., Fireworks AI Inc., Google LLC, Groq Inc., Hugging Face Inc., Lambda Labs Inc., Microsoft Corp., Modal Labs Inc., Nebius Group N.V, NVIDIA Corp., Replicate Inc., RunPod Inc. and SambaNova Systems Inc.

Market Research Insights

- The market is defined by a dynamic interplay of hardware innovation and economic imperatives. The adoption of an OPEX model for AI has been shown to reduce total cost of ownership by up to 40% for startups, democratizing access to high-end computing. This shift is fueling demand for scalable AI infrastructure and versatile AI inference platforms.

- At the same time, AI hardware innovation is constant, with custom silicon for AI delivering a 3x performance increase over previous generation hardware for specific AI model serving tasks. This competitive environment benefits end-users seeking cost-efficient AI deployment and real-time AI insights.

- However, navigating choices between cloud vs edge inference remains a key strategic decision, with on-device intelligence adoption growing by 25% in sectors where data privacy is paramount. AI workload orchestration is therefore critical for managing these distributed systems effectively.
